How to Measure Your AI Visibility (And Why Most Methods Fail)

Why Can't You Just Use Google Rankings to Measure AI Visibility?

Because AI systems don't follow Google's playbook. Data across 18,000+ queries shows only 13-25% overlap between Google's top results and what AI platforms actually cite. Your page can rank #1 on Google and never appear in a ChatGPT response. Or rank nowhere on Google and get cited repeatedly by Perplexity.

This gap reflects a real difference in how these systems find content.

Google's ranking is rooted in lexical search - matching keywords in your query to keywords on pages, weighted by links, authority signals, and hundreds of ranking factors. AI systems lean on semantic search - matching the meaning of a question to content that answers it, with far less regard for keyword density or backlink profiles. Keywords still play a part: these systems blend keyword matching (BM25) with vector embeddings through Reciprocal Rank Fusion (RRF) to build their citation lists.
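To make the RRF step concrete, here is a minimal sketch of the standard formula, where each document scores the sum of 1 / (k + rank) across the ranked lists it appears in. The page IDs are made up, and k=60 is a commonly used default rather than a value any AI platform has confirmed.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one list.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is an assumed, commonly used default.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical result lists for one query.
bm25_ranking = ["page-a", "page-b", "page-c"]    # keyword match order
vector_ranking = ["page-c", "page-a", "page-d"]  # embedding similarity order

fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)  # ['page-a', 'page-c', 'page-b', 'page-d']
```

Note that page-c ranks last on keywords but still lands second overall, because the embedding list puts it first. That is the kind of reshuffling that makes Google position a poor predictor of AI citations.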

Key Takeaways

- Google rankings and AI citations overlap only 13-25%. Ranking #1 on Google says almost nothing about your AI visibility.

- Binary mention tracking produces noise. Weighted rank scoring fixes this.

- Track each AI provider separately - they cite completely different sources for the same query.

- AI-referred visitors convert 5-9x better than organic search. Start measuring now.

How big is the divergence?

| Platform Pair | Citation Overlap | What It Means |
|---|---|---|
| ChatGPT - Perplexity | 25% | Highest overlap among major AI platforms |
| Google AI Overviews - ChatGPT | 21% | Moderate divergence |
| Google AI Overviews - Perplexity | 19% | Significant divergence |
| Google AI Overviews - AI Mode | 14% | Google's own AI systems disagree with each other |
| Bing Copilot - ChatGPT | 14% | Lowest overlap between major platforms |

Source: SE Ranking study, 2,000 queries. SEO Clarity found only 19% of 12,011 AI Mode citations came from Google's top-20 organic rankings.

Even within Google, their AI Overviews and AI Mode agree on sources only 13.7% of the time across 730,000 responses. Two AI products from the same company, answering the same questions, citing different pages.

The SVR/UAVR/RCC framework

Duane Forrester (formerly Bing, now at Gradient Group) proposed three diagnostic metrics to quantify this divergence:

- SVR (Shared Visibility Rate): What percentage of AI-cited URLs also appear in Google's top 10. SVR > 0.6 means your content works for both systems. SVR < 0.3 means AI trusts other sources.

- UAVR (Unique Assistant Visibility Rate): What percentage of AI citations come from outside Google's top 10. High UAVR means AI is finding content Google doesn't surface.

- RCC (Repeat Citation Count): How often a domain gets cited across different queries. A proxy for semantic authority.

These are directionally useful. They help you understand whether your content aligns with both search paradigms or only one. But they're one strategist's diagnostic framework, not independently validated benchmarks. The thresholds (SVR > 0.6 = "good") are informed suggestions, not peer-reviewed standards.
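As a rough illustration, the three metrics can be computed from a list of AI-cited URLs and Google's top 10 for the same query. This sketch follows the definitions as worded above, so SVR and UAVR share a denominator and sum to 1. Forrester's own formulas may differ in detail, and the function names and example.com URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def svr(ai_urls, google_top10):
    """Share of AI-cited URLs that also appear in Google's top 10."""
    cited = set(ai_urls)
    return len(cited & set(google_top10)) / len(cited) if cited else 0.0

def uavr(ai_urls, google_top10):
    """Share of AI-cited URLs that come from outside Google's top 10."""
    cited = set(ai_urls)
    return len(cited - set(google_top10)) / len(cited) if cited else 0.0

def rcc(citations_by_query):
    """Number of distinct queries in which each domain is cited."""
    counts = Counter()
    for urls in citations_by_query.values():
        counts.update({urlparse(u).netloc for u in urls})
    return counts

# Hypothetical data for one query.
google_top10 = ["https://a.example.com/guide", "https://b.example.com/post"]
ai_urls = [
    "https://a.example.com/guide",
    "https://c.example.com/thread",
    "https://d.example.com/docs",
    "https://e.example.com/review",
]
print(svr(ai_urls, google_top10))   # 0.25
print(uavr(ai_urls, google_top10))  # 0.75

# Hypothetical citations across two queries.
repeat_counts = rcc({
    "best crm for small teams": ["https://a.example.com/guide", "https://c.example.com/thread"],
    "top crm tools for startups": ["https://a.example.com/pricing"],
})
print(repeat_counts["a.example.com"])  # 2
```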

And they don't address the harder problem: the measurement itself is broken.

What Goes Wrong When You Track AI Visibility?

Most AI visibility tracking produces noise that looks like signal. The core issue isn't which metrics you pick. It's that LLM outputs are probabilistic, and standard measurement approaches treat them like they're deterministic. They're not.

The binary counting trap

The simplest approach to measuring AI visibility is binary: for each query, check if your brand appears in the AI response. Mentioned = 1, not mentioned = 0. Add them up, divide by total queries, get a percentage.

This throws away the most useful information you have. A rank #1 strong endorsement ("We highly recommend this tool for competitive analysis") counts the same as a rank #8 afterthought where your brand is barely listed among 10 alternatives.

It gets worse with small sample sizes. With 9 queries (3 prompts across 3 AI providers), you get exactly 10 possible scores: 0%, 11%, 22%, 33%... up to 100%. A "22% drop" sounds alarming. It means 2 out of 9 queries flipped. That's Tuesday for an LLM.
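The granularity problem is easy to see by listing every score a binary count can produce. A small sketch (the function name is illustrative):

```python
def possible_binary_scores(n_queries):
    """Every visibility percentage a binary mention count can produce."""
    return [round(100 * hits / n_queries) for hits in range(n_queries + 1)]

scores = possible_binary_scores(9)  # 3 prompts x 3 providers
print(scores)       # [0, 11, 22, 33, 44, 56, 67, 78, 89, 100]
print(len(scores))  # 10
```

With 9 queries the score can only move in steps of about 11 points, so a single flipped response already looks like a double-digit swing.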

Point-to-point comparison fires on every check

Compare today's score to yesterday's. If the difference exceeds a threshold, fire an alert. Sounds reasonable.

I built this exact system for CompetLab's AI visibility tracking. Here's what happened during the first week of daily checks on one of our tracked projects:

| Day | Visibility | Alert | What Actually Happened |
|---|---|---|---|
| Feb 9 | 89% to 67% | "Dropped from 89% to 67%" - CRITICAL | Normal LLM variance |
| Feb 10 | 67% to 89% | "Improved from 67% to 89%" | Bounced right back |
| Feb 11 | 89% to 67% | "Dropped from 89% to 67%" | Same oscillation |
| Feb 13 | 67% to 44% | "Dropped from 67% to 44%" | 2 queries stopped citing |
| Feb 14 | 44% to 22% | "Dropped to 22%" - CRITICAL | Maybe real... or more noise? |

Five alerts across five checks. The "top competitor" flip-flopped between two domains daily. Every morning I opened the dashboard to another CRITICAL alert that meant nothing.
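Replaying the scores from the table through a naive point-to-point check shows why every check fired. This is a simplified sketch, not CompetLab's actual alerting code, and the 15-point threshold is an assumption borrowed from the trend-confirmation rule described later in this article.

```python
def point_to_point_alerts(scores, threshold=15):
    """Flag every check whose score moved by >= threshold since the last one."""
    alerts = []
    for i in range(1, len(scores)):
        delta = scores[i] - scores[i - 1]
        if abs(delta) >= threshold:
            alerts.append((i, scores[i - 1], scores[i]))
    return alerts

# Score sequence implied by the table: 89 -> 67 -> 89 -> 67 -> 44 -> 22
history = [89, 67, 89, 67, 44, 22]
alerts = point_to_point_alerts(history)
print(len(alerts))  # 5
for index, before, after in alerts:
    direction = "Dropped" if after < before else "Improved"
    print(f"check {index}: {direction} from {before}% to {after}%")
```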

This isn't a CompetLab-specific problem. AirOps research found the same pattern at scale: only 30% of brands stayed visible from one AI answer to the next. Just 20% held presence across five consecutive runs of the same query.

Citation hallucination

Even when you do get cited, the citation might be fabricated. A Tow Center for Digital Journalism study (late 2025) found 60%+ of AI citations across eight platforms were incorrect or misleading:

- GPT-4o: 66% of citations fabricated or contain errors

- Grok 3: 94% inaccuracy rate

- Perplexity: 37% error rate

These numbers will improve as models update, but the structural problem remains - citation accuracy varies wildly across providers.

If a domain gets cited repeatedly (high RCC in Forrester's framework), it might reflect consistent hallucination patterns rather than genuine semantic authority.

Prompt variability

Minor rephrasing of the same question causes 30-50% divergence in LLM outputs. "Best CRM for small teams" and "top CRM tools for startups" can produce completely different citation lists from the same AI platform.

Single-query testing doesn't measure anything useful. You need query clusters - 10-20 variations of a core concept - to get signal through the noise.
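One simple way to build a query cluster is to cross a few phrasings with a few audiences. The templates and audiences below are illustrative assumptions, so swap in the wording your buyers actually use.

```python
from itertools import product

def build_query_cluster(templates, categories, audiences):
    """Expand phrasing templates into every category/audience combination."""
    return [
        template.format(category=category, audience=audience)
        for template, category, audience in product(templates, categories, audiences)
    ]

# Hypothetical phrasings of one core concept.
templates = [
    "best {category} for {audience}",
    "top {category} tools for {audience}",
    "which {category} should {audience} use",
]
cluster = build_query_cluster(
    templates,
    categories=["CRM"],
    audiences=["small teams", "startups", "freelancers", "agencies"],
)
print(len(cluster))  # 12
print(cluster[0])    # best CRM for small teams
```

Score the cluster as a whole rather than any single phrasing, so one unlucky wording doesn't decide the result.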

What Actually Works for Measuring AI Visibility?

We spent two weeks building, testing, and throwing away measurement approaches at CompetLab before landing on something that actually works. The short version: weighted rank scoring instead of binary counting, trend confirmation instead of point-to-point comparison, and per-provider tracking instead of one aggregate number.

Weighted rank scoring

We tested three weight curves on our production data (6 checks over 2 weeks) and ran Monte Carlo simulations - randomized tests that generate thousands of fake-but-realistic data patterns - across 7 scenarios: stable brands, gradual declines, sudden crashes, noisy oscillation, recoveries. 2,100 simulated check sequences total.

The curve that worked best:

| Rank | Score | Why |
|---|---|---|
| #1 | 1.00 | Top recommendation |
| #2 | 0.85 | Strong second position |
| #3 | 0.70 | Still prominent |
| #4 | 0.50 | Sharp drop - most users stop reading here |
| #5 | 0.40 | Diminishing returns |
| #6+ | 0.25 | Present but minimal impact |
| Not found | 0 | Not cited |

Why steep at the top? Positions #1-3 capture most user attention in AI responses, similar to how the top 3 organic results capture 60%+ of clicks in traditional search.

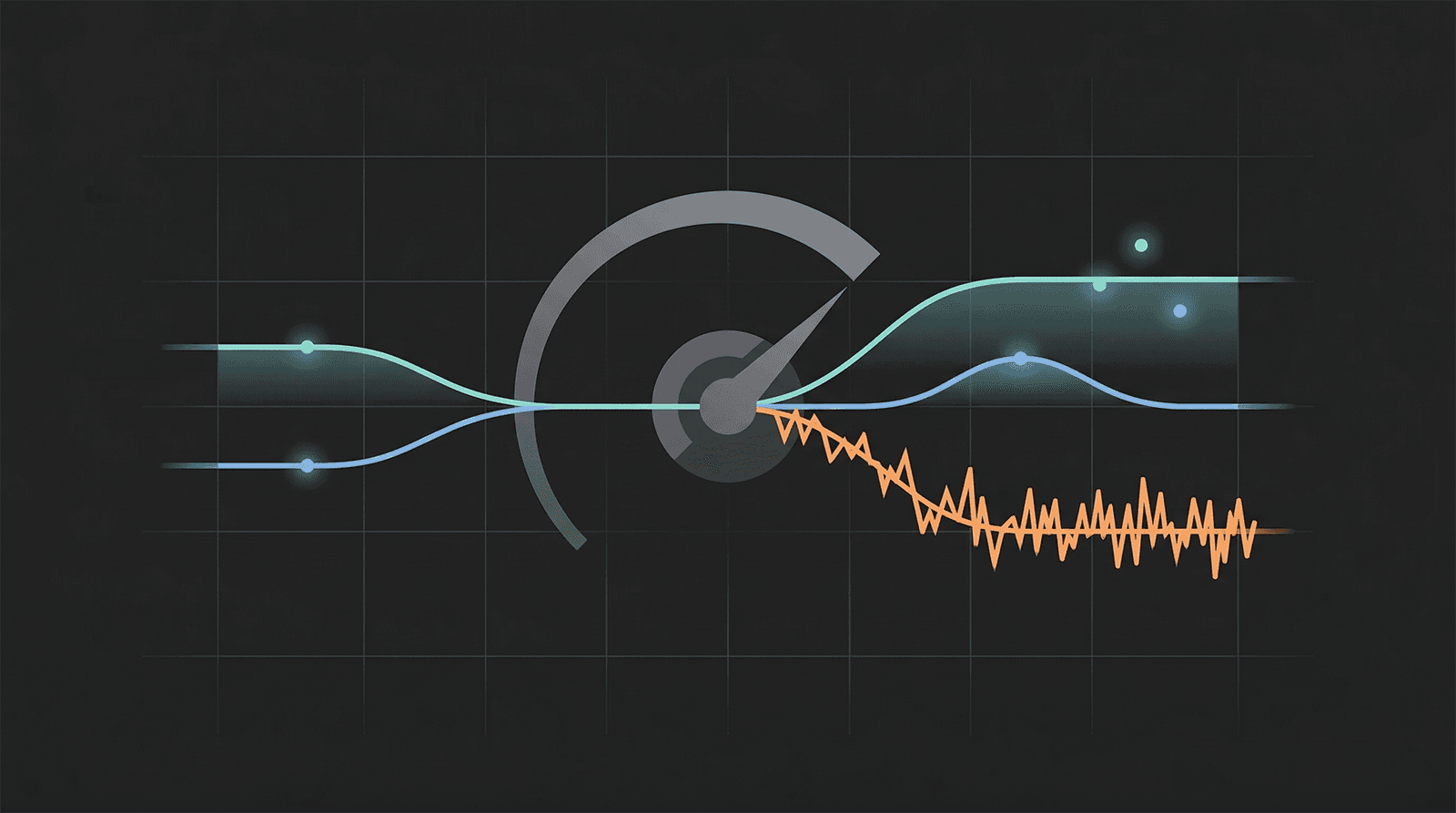

In practice, this changes everything. Small rank shuffles (#2 to #3) produce small score changes (0.85 to 0.70) instead of binary flips (1 to 0). That oscillation pattern from our production data? Average score swing dropped from 22 points per check with binary counting to 13 points with weighted scoring. Roughly 40% of the noise gone, just by preserving rank information.
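Here is a minimal sketch of the weight curve from the table, compared against binary counting on the same data. It assumes the overall score is a plain average of per-query scores on a 0-100 scale, which is one reasonable reading rather than a documented formula, and the rank lists are made up.

```python
RANK_WEIGHTS = {1: 1.00, 2: 0.85, 3: 0.70, 4: 0.50, 5: 0.40}
TAIL_WEIGHT = 0.25  # rank #6 and below

def rank_score(rank):
    """Score one query result; rank is None when the brand is not cited."""
    if rank is None:
        return 0.0
    return RANK_WEIGHTS.get(rank, TAIL_WEIGHT)

def visibility(ranks, weighted=True):
    """Average per-query score on a 0-100 scale (assumed aggregation)."""
    if weighted:
        values = [rank_score(r) for r in ranks]
    else:
        values = [0.0 if r is None else 1.0 for r in ranks]
    return 100 * sum(values) / len(values)

# Hypothetical ranks for the same four queries on two consecutive checks.
check_a = [1, 2, None, 6]
check_b = [1, 3, None, 6]  # one result slipped from #2 to #3

print(visibility(check_a, weighted=False), visibility(check_b, weighted=False))
print(round(visibility(check_a), 2), round(visibility(check_b), 2))
```

Binary counting reports the same number for both checks and would jump by 25 points the moment one result dropped out entirely. The weighted score moves by a few points, in proportion to what actually changed.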

Trend confirmation over snapshots

Weighted scoring reduces noise but doesn't eliminate it. You still need to separate real trends from random walk.

The approach that tested best: require two consecutive score movements in the same direction, each above a minimum threshold. If the score drops 15+ points from check A to check B, and another 15+ points from check B to check C, that's confirmed. If the score drops at check B but bounces back at check C, it's noise - suppress it.

Why it works:

- Oscillation (A to B to A): Deltas move in opposite directions. Suppressed.

- Real decline: Both deltas negative and significant. Fires.

- Gradual drift: Small same-direction deltas don't reach threshold. Silent until meaningful.
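The three cases above can be sketched in a few lines. This is an illustrative version of the rule as described, not the production implementation, and the 15-point threshold and sample score series are assumptions.

```python
def confirmed_trend_alerts(scores, threshold=15):
    """Alert only when two consecutive deltas share a direction and each
    is at least `threshold` points in size."""
    alerts = []
    for i in range(2, len(scores)):
        prev_delta = scores[i - 1] - scores[i - 2]
        curr_delta = scores[i] - scores[i - 1]
        same_direction = prev_delta * curr_delta > 0
        if same_direction and min(abs(prev_delta), abs(curr_delta)) >= threshold:
            alerts.append((i, "decline" if curr_delta < 0 else "improvement"))
    return alerts

oscillating = [89, 67, 89, 67, 89]  # A to B to A: deltas alternate sign
declining = [89, 67, 44, 22]        # sustained, significant drops
drifting = [89, 84, 79, 74]         # same direction, but below threshold

print(confirmed_trend_alerts(oscillating))  # []
print(confirmed_trend_alerts(declining))    # [(2, 'decline'), (3, 'decline')]
print(confirmed_trend_alerts(drifting))     # []
```

As written, a sustained decline keeps firing on every further check. A real system would probably deduplicate, for example by alerting once per confirmed trend.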

Our simulation results: 95%+ accuracy with under 1% false positive rate on oscillating data. Point-to-point comparison? 52-70% false positives on the same oscillating data.

The trade-off is a 3-5 day detection delay (needs 2 consecutive drops to confirm at 2-3 day check intervals). Worth it. I'd rather get one real alert per week than five fake ones per day.

Per-provider tracking

ChatGPT, Perplexity, and Google AI Overviews aren't just slightly different. They have fundamentally different citation philosophies:

- ChatGPT: Favors authoritative knowledge bases. Wikipedia-heavy. Prefers established, well-structured content.

- Perplexity: Prioritizes community discussions and recency. Reddit-concentrated, with a 2-3 day content decay.

- Google AI Overviews: Balanced approach across professional and social platforms.

One aggregate "AI visibility score" hides all of this. You can be invisible on ChatGPT and well-cited on Perplexity, or the reverse. We track each provider separately in CompetLab because the optimization strategy is different for each one.
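A quick sketch of what the blended number hides. The scores are made-up weighted rank scores on a 0-1 scale and the provider labels are just strings.

```python
from collections import defaultdict

def scores_by_provider(results):
    """Average score per provider from (provider, score) pairs."""
    buckets = defaultdict(list)
    for provider, score in results:
        buckets[provider].append(score)
    return {provider: sum(vals) / len(vals) for provider, vals in buckets.items()}

# Hypothetical results: nearly invisible on one provider, strong on another.
results = [
    ("chatgpt", 0.0), ("chatgpt", 0.0), ("chatgpt", 0.25),
    ("perplexity", 1.0), ("perplexity", 0.85), ("perplexity", 0.70),
]

per_provider = scores_by_provider(results)
blended = sum(score for _, score in results) / len(results)

for provider, score in per_provider.items():
    print(f"{provider}: {score:.2f}")
print(f"blended: {blended:.2f}")
```

The blended figure lands near the middle and describes neither provider, which is the argument for keeping the breakdown.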

Honestly, most AI visibility tools on the market still show you a single blended number. That's like combining your Google ranking and your Bing ranking into one score and calling it your "search visibility." Nobody does that. Same logic applies here.

Why bother? The business case.

AI referral traffic is small right now - roughly 1% of total web traffic as of early 2026. But the conversion numbers change the math. Analysis of 12 million visits across 350+ businesses:

| Traffic Source | Conversion Rate |
|---|---|
| Claude | 16.8% |
| ChatGPT | 14.2% |
| Perplexity | 12.4% |
| Google Organic | 2.8% |

Source: Seer Interactive and RankScience studies.

AI-sourced customers show 67% higher lifetime value and 73% lower cancellation rates. Makes sense - someone who trusted an AI recommendation enough to click already did their evaluation inside the conversation. They show up pre-sold.

A 5-9x conversion multiplier on a growing channel is worth tracking even at 1% of total traffic.
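A back-of-the-envelope check on that claim: how much of total conversions can a 1% traffic channel account for? This sketch uses the ChatGPT rate from the table and assumes, purely for illustration, that all other traffic converts at the Google Organic rate.

```python
def share_of_conversions(ai_traffic_share, ai_cvr, other_cvr):
    """Fraction of all conversions that come from AI-referred visits."""
    ai = ai_traffic_share * ai_cvr
    other = (1 - ai_traffic_share) * other_cvr
    return ai / (ai + other)

# 1% of traffic at 14.2% conversion vs. 99% of traffic at 2.8% (assumed).
share = share_of_conversions(0.01, 0.142, 0.028)
print(f"{share:.1%}")  # 4.9%
```

Under those assumptions, 1% of visits produces close to 5% of conversions.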

How Do You Set Up AI Visibility Tracking Today?

Start with manual tracking across 25-50 high-intent queries tested weekly on ChatGPT, Perplexity, Claude, and Google AI Overviews. Graduate to tools when manual becomes unsustainable. Baseline data matters more than precision right now.

Manual method

Step 1: Define queries across four intent categories:

- Awareness: "what is [your category]"

- Consideration: "best [category] for [use case]"

- Comparison: "[your brand] vs [competitor]"

- Decision: "[your brand] reviews" or "is [brand] worth it"

Step 2: Test each query on each platform. Log: date, query, platform, cited (yes/no), rank position, sentiment (recommended/mentioned/negative), URL cited.

Step 3: Calculate your mention rate: queries mentioning you divided by total queries. Industry leaders target 30-50% for high-intent queries. If you're a startup, 5-10% is normal and fine - track it over time, not against enterprise benchmarks.

Step 4: Run weekly. Monthly misses real shifts. Daily burns resources for no extra signal.
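If you would rather keep the log in a script than a spreadsheet, the steps above map onto a small record type and a CSV export. The field names mirror the Step 2 list. The brand, URLs, and dates are placeholders.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional

@dataclass
class Check:
    date: str
    query: str
    platform: str
    cited: bool
    rank: Optional[int]  # None when not cited
    sentiment: str       # "recommended", "mentioned", "negative", or ""
    url: str

def mention_rate(checks):
    """Step 3: queries mentioning you divided by total queries."""
    return sum(c.cited for c in checks) / len(checks)

def to_csv(checks):
    """Serialize the log with a header row, ready to paste into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Check)])
    writer.writeheader()
    for check in checks:
        writer.writerow(asdict(check))
    return buffer.getvalue()

# Hypothetical week of checks for a placeholder brand.
checks = [
    Check("2026-02-09", "best crm for small teams", "chatgpt", True, 2,
          "recommended", "https://example.com/crm"),
    Check("2026-02-09", "best crm for small teams", "perplexity", False, None, "", ""),
    Check("2026-02-09", "example crm reviews", "chatgpt", False, None, "", ""),
    Check("2026-02-09", "example crm reviews", "perplexity", False, None, "", ""),
]

print(mention_rate(checks))  # 0.25
print(to_csv(checks))
```

Logging rank and sentiment from day one costs nothing extra and lets you move from mention rate to weighted scoring later without re-collecting data.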

Track AI referral traffic in GA4

Most AI-referred visits show up as "direct" because users copy-paste URLs from AI responses instead of clicking. Set up a custom channel group to capture what you can:

Create an "AI Assistants" channel in GA4 (Admin - Data display - Channel groups) with this regex on Session source:

(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|gemini\.google\.com|copilot\.microsoft\.com)

This won't catch everything. The "dark traffic problem" means real AI-referred visits are probably several times higher than what shows in analytics. But it gives you a baseline.
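Before saving the channel group, the pattern can be sanity-checked offline. This sketch uses Python's `re` module with a full match, on the assumption that this mirrors GA4's "matches regex" condition (the "partially matches regex" condition would behave more like `re.search`). The sample source values are made up.

```python
import re

AI_SOURCES = re.compile(
    r"(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai"
    r"|gemini\.google\.com|copilot\.microsoft\.com)"
)

def is_ai_source(session_source):
    """True when the whole session source equals one of the AI domains."""
    return AI_SOURCES.fullmatch(session_source) is not None

# Hypothetical Session source values.
samples = ["chatgpt.com", "perplexity.ai", "google", "chatgptxcom", "claude.ai"]
matched = [s for s in samples if is_ai_source(s)]
print(matched)  # ['chatgpt.com', 'perplexity.ai', 'claude.ai']
```

The escaped dots matter: without the backslashes, `chatgptxcom` would match too.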

Tool options by budget

| Tier | Tools | Price | Best For |
|---|---|---|---|
| Starter | Otterly, Hall AI | $0-29/mo | Solo marketers, initial monitoring |
| Mid-market | Peec, Rankscale, SE Ranking | $50-200/mo | Agencies, 10-100 tracked prompts |
| Enterprise | Scrunch AI, Profound AI | $300-1,500+/mo | Large brands, compliance |
| SEO-integrated | Semrush AI Toolkit | Add-on | Teams already using Semrush |

| SEO-integrated | Semrush AI Toolkit | Add-on | Teams already using Semrush |

One thing to watch: some tools query AI platforms through APIs, others scrape the actual web interface. API-based tools can produce results that differ by 24% from what real users see. Ask your tool vendor which method they use before trusting the numbers.

Minimum viable setup

- Pick 25 high-intent queries relevant to your category

- Test manually across ChatGPT, Perplexity, and Google AI Overviews

- Log results in a spreadsheet (date, query, platform, cited, rank, sentiment)

- Set up the GA4 channel group above

- Repeat weekly for 4-6 weeks until you have enough data to spot trends

- Graduate to a paid tool when the spreadsheet gets painful

What to Do Next

If you're not tracking AI visibility yet, start this week. Pick 10-25 queries, test them on ChatGPT and Perplexity, log results. Set up the GA4 channel group. That's your baseline.

If you're already tracking but drowning in noise, switch from binary counting to weighted rank scoring and stop reacting to single-check swings. Require confirmed trends before acting.

This is what we built CompetLab to do - track AI visibility across ChatGPT, Claude, and Gemini with weighted ranking, per-provider breakdowns, and competitive gap analysis. We built it because we hit every measurement problem described in this article and needed to solve them for ourselves first.

Read next: How ChatGPT Searches Differently Than Google - the retrieval mechanics behind the divergence data in this article.

Frequently Asked Questions

How often should you check AI visibility?

Weekly. Monthly misses shifts. Daily creates noise you'll waste time reacting to. If you use an automated tool, let it collect daily but review the data weekly.

Does schema markup improve AI citations?

Mixed evidence. FAQ schema shows a marginal edge in some studies. But a SearchAtlas study across OpenAI, Gemini, and Perplexity found "domains with complete schema coverage perform no better than minimal or no schema." Implement it because it's good practice. Don't expect it to flip a switch.

What's a good AI mention rate?

Industry leaders average 30-50% for high-intent queries. Emerging brands start at 5-10%. The more useful number is your mention rate relative to your top 3 competitors, tracked over time. If the gap is shrinking week over week, you're on the right track.

Does AI visibility actually drive traffic?

Small volume, high quality. About 1% of total web traffic as of early 2026, but 5-9x better conversion rates. One risk: restructuring content purely for AI extraction has been documented to drop Google rankings from position 3 to position 9. Track both, optimize for both, sacrifice neither.
