How AI Answer Engines Actually Select Your Content

How Do AI Answer Engines Find and Select Content?

You rank on Google. You have backlinks. Your domain authority is solid. But when someone asks ChatGPT or Perplexity about your topic, your content doesn't get mentioned. A smaller competitor with a weaker domain shows up instead.

Key Takeaways

- 92-96% of AI Overview sources rank outside Google's top 20. SEO ranking alone won't get you cited.

- Content structure beats domain authority. FAQ schema pages are 3.2x more likely to be cited.

- Each AI platform favors different source types. Optimizing for "AI" generically wastes effort.

- Rewrite your top 10 pages answer-first, add FAQ schema, and test each platform separately.

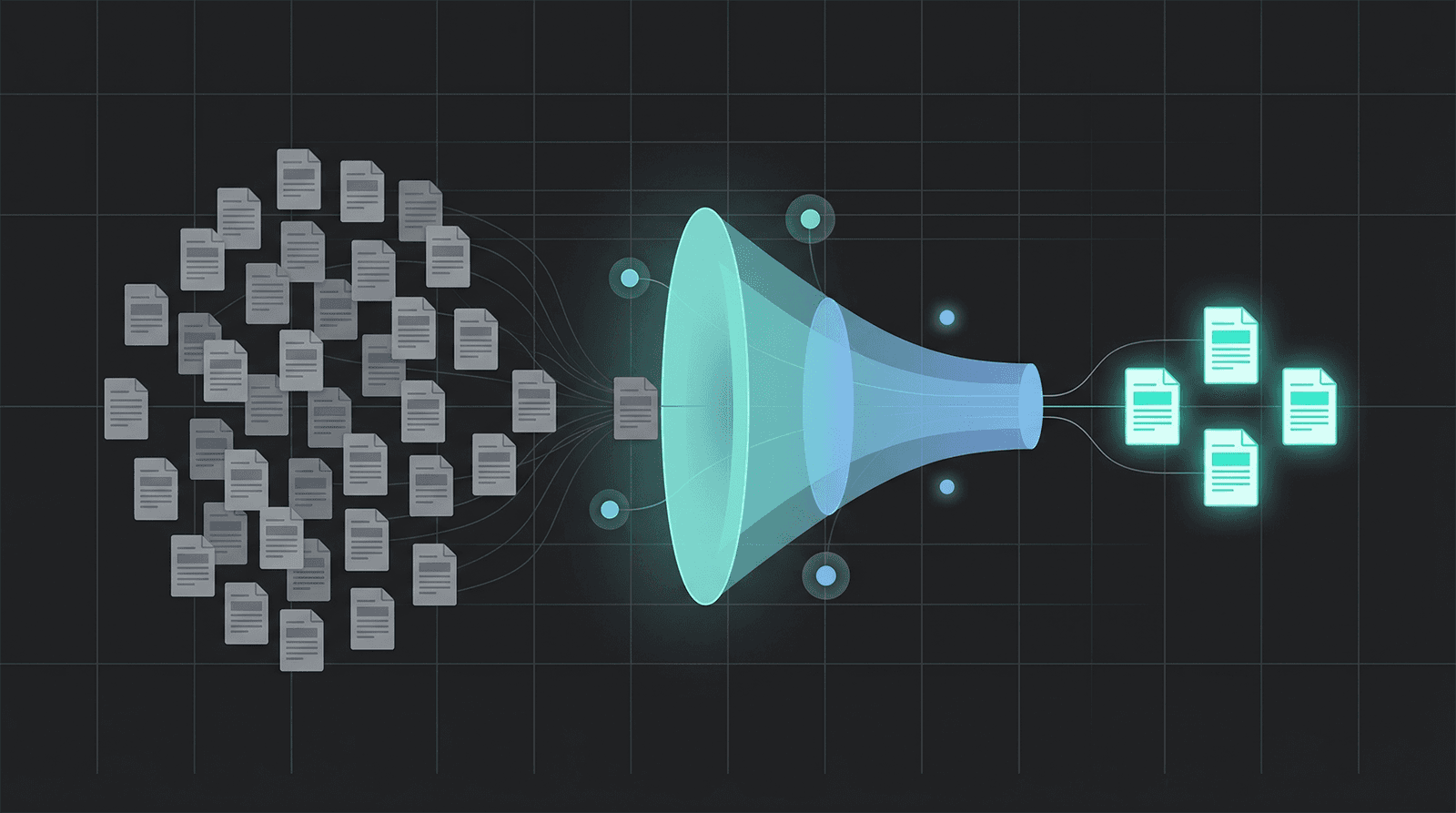

The problem isn't your SEO. It's that AI answer engines select content differently than Google ranks it. They use a two-stage pipeline: first, a retrieval system pulls hundreds of candidate pages using keyword matching (BM25) and semantic similarity (vector embeddings). Then a re-ranking model scores those candidates on answer quality, clarity, and extractability to pick the handful that appear in the final response.

Think of it like a job interview. The resume screen (retrieval) gets you into the building. The actual interview (re-ranking) decides if you get hired.

The retrieval stage: getting into the candidate pool

Stage one combines two methods. Lexical retrieval (BM25) matches exact keywords and phrases in your content against the query. Semantic retrieval uses vector embeddings to find conceptually related content even when the exact words differ.

Both methods contribute roughly equally to the candidate pool. Perplexity uses a three-layer system that starts with initial retrieval, applies XGBoost quality scoring, then runs recency and credibility gates. Google AI Overviews draw from the same index that powers organic search, pulling 76-92% of cited pages from the top 100 organic results.

If your page doesn't match the query lexically or semantically, it never enters the candidate pool. This is where traditional keyword strategy and semantic coverage still matter.
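The sketch below makes the two retrieval signals concrete with a small hybrid retrieval example in Python. It rests on several assumptions:

- whitespace tokenization;
- standard Okapi BM25 with a Lucene-style IDF and common defaults (k1=1.5, b=0.75);
- tiny hand-made vectors standing in for real embeddings;
- a 50/50 blend, to mirror "roughly equally".

No answer engine publishes its tokenizer, embedding model, or fusion method. Treat `bm25_scores` and `candidate_pool` as hypothetical illustrations, not any platform's implementation.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Okapi BM25 score of each doc for the query (whitespace tokens, Lucene-style IDF)."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n
    df = Counter(term for t in tokenized for term in set(t))  # document frequency
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            freq = tf[term]
            score += idf * freq * (k1 + 1) / (freq + k1 * (1 - b + b * len(tokens) / avgdl))
        scores.append(score)
    return scores

def cosine(u, v):
    """Cosine similarity between two vectors (the 'semantic' signal)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0

def min_max(xs):
    """Rescale scores to 0-1 so lexical and semantic scores can be blended."""
    lo, hi = min(xs), max(xs)
    return [(x - lo) / (hi - lo) if hi > lo else 0.0 for x in xs]

def candidate_pool(query, query_vec, docs, doc_vecs, k=2, alpha=0.5):
    """Blend normalized BM25 and cosine scores; return indices of the top-k docs."""
    lexical = min_max(bm25_scores(query, docs))
    semantic = min_max([cosine(query_vec, v) for v in doc_vecs])
    hybrid = [alpha * l + (1 - alpha) * s for l, s in zip(lexical, semantic)]
    return sorted(range(len(docs)), key=lambda i: hybrid[i], reverse=True)[:k]

docs = [
    "heat pumps work in winter the same way as in summer",
    "our playful guide to cosy homes",
    "cold weather performance of air source heat pumps",
]
doc_vecs = [[0.9, 0.1], [0.1, 0.9], [0.8, 0.3]]  # toy stand-ins for embeddings
pool = candidate_pool("heat pump winter performance", [1.0, 0.0], docs, doc_vecs, k=2)
print(pool)  # → [2, 0]
```

Page 1 shares no query terms and points the wrong way semantically, so it never makes the pool. In miniature, that is the "never enters the candidate pool" failure.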

The re-ranking stage: where winners are decided

Re-ranking is where the real selection happens. Cross-encoder models read the query and each candidate page together, which allows much deeper analysis than the initial retrieval pass. Research shows cross-encoders deliver a 33-42% accuracy improvement over retrieval-only scoring.

This stage evaluates how directly your content answers the question, how extractable your key points are, and how clearly information is structured. A well-structured page with a mediocre domain rating will beat a high-authority page with buried answers.

A useful mental model (not a formula)

One framework describes AI content selection as four weighted factors: lexical match (~40%), semantic match (~40%), re-ranking (~15%), and clarity signals (~5%). This comes from Duane Forrester's research and is directionally useful.

But treat these as a mental model, not fixed weights. Validation research shows AI engines use dynamic, query-adaptive weighting - the balance shifts based on query type, topic, and available sources. The important insight is structural: retrieval gets you in the door, re-ranking decides the winner.
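Written as a formula, the framework is a weighted sum. The symbols are our own shorthand:

- L, M, R and C are the lexical match, semantic match, re-ranking and clarity scores for query q and document d.
- Each weight is written as a function of q to encode the query-adaptive caveat.

Only the default weights come from the framework itself.

```latex
S(q, d) = w_L(q)\,L(q, d) + w_M(q)\,M(q, d) + w_R(q)\,R(q, d) + w_C(q)\,C(d),
\qquad w_L + w_M + w_R + w_C = 1

\text{default: } (w_L,\ w_M,\ w_R,\ w_C) \approx (0.40,\ 0.40,\ 0.15,\ 0.05)
```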


Why Does Traditional SEO Ranking Fail for AI Visibility?

Ranking on page one of Google does not guarantee AI citation. A Profound study of 30M+ citations (2025) found that 92-96% of sources cited in Google AI Overviews rank outside the traditional top 20. Position alone is not the gate.

This breaks a core assumption most SEO strategies depend on: that ranking higher means more visibility everywhere. For AI answer engines, it doesn't. And honestly, I think most SEO teams still haven't internalized this.


What AI engines look for instead

When multiple sources cover the same topic, AI engines apply a conflict-resolution hierarchy. Based on cross-platform analysis, the order is: credibility first, then recency, then consensus among sources, then contextual relevance, then citation density.

Notice what's missing from that list: domain rating, backlink count, and organic position. These factors help with retrieval (getting into the candidate pool), but they carry little weight in re-ranking.
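The "first, then" ordering above is a lexicographic hierarchy: a lower-priority signal only breaks ties in the higher ones. The sketch below expresses that literally as a Python sort key. The field scales are hypothetical, and real engines almost certainly blend these signals rather than applying strict tie-breaks. There is deliberately no field for domain rating, backlinks, or organic position.

```python
from dataclasses import dataclass

@dataclass
class Source:
    url: str
    credibility: int         # hypothetical 0-5 rating
    recency_days: int        # days since last substantive update (lower = fresher)
    consensus: float         # share of other sources that agree, 0-1
    relevance: float         # contextual relevance to the query, 0-1
    citation_density: float  # citations per 1,000 words

def resolve(sources):
    """Order sources by credibility, then recency, consensus, relevance, citation density."""
    return sorted(
        sources,
        key=lambda s: (-s.credibility, s.recency_days, -s.consensus, -s.relevance, -s.citation_density),
    )

sources = [
    Source("a.example", credibility=4, recency_days=200, consensus=0.9, relevance=0.9, citation_density=5.0),
    Source("b.example", credibility=4, recency_days=10, consensus=0.5, relevance=0.4, citation_density=1.0),
    Source("c.example", credibility=5, recency_days=400, consensus=0.2, relevance=0.2, citation_density=0.0),
]
print([s.url for s in resolve(sources)])  # → ['c.example', 'b.example', 'a.example']
```

Under strict tie-breaking, b.example beats a.example on freshness alone, even though a.example is more relevant.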

What actually drives backlink value for AI visibility is different from traditional link building. The correlation between link authority and AI citation is weaker and more threshold-based than in Google organic search.

The Heatable vs Aira example

Matt Diggity's AI Overviews case study illustrates this perfectly. For a query about heat pump cold-weather performance:

- Heatable (higher-ranking) used playful copy: "When the Earth goes cold, we're likely to start seeing pigs fly" and "you could say they're more down-to-earth."

- Aira (lower-ranking) led with a direct answer: "Heat pumps work exactly the same way in winter as they do in summer," followed by a clean technical explanation.

Google's AI Overview ignored Heatable and the other top organic results. It cited Aira's lower-ranking but clearer, more extractable content instead.

This is re-ranking in action. Aira's page gave the AI a clean, quotable answer. Heatable's page made the AI work to extract facts from metaphors.
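A real re-ranker is a trained cross-encoder model. The sketch below deliberately swaps in a crude heuristic to show why Aira's opening wins. It scores query-term coverage in the lead sentence, plus a brevity check. The scoring rule, the 40-word cutoff, and the function names are our assumptions, not how Google scores pages.

```python
import re

def extractability_score(query, page_text):
    """Heuristic stand-in for a cross-encoder: rewards pages that answer in their first sentence."""
    terms = set(query.lower().split())
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", page_text.strip()) if s]
    if not sentences or not terms:
        return 0.0
    lead = sentences[0]
    lead_terms = set(re.findall(r"[a-z0-9]+", lead.lower()))
    coverage = len(terms & lead_terms) / len(terms)    # share of query terms in the lead sentence
    brevity = 1.0 if len(lead.split()) <= 40 else 0.5  # long lead sentences are harder to quote
    return coverage * brevity

def rerank(query, candidates, top_n=1):
    """Stage two: keep only the top_n candidates by extractability score."""
    return sorted(candidates, key=lambda page: extractability_score(query, page), reverse=True)[:top_n]

aira = "Heat pumps work exactly the same way in winter as they do in summer."
heatable = "When the Earth goes cold, we're likely to start seeing pigs fly."
print(rerank("heat pumps winter", [heatable, aira]))
```

Aira's opening sentence contains every query term, so it scores 1.0. Heatable's metaphor contains none, so it scores 0.0.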

What Content Structures Get Cited Most by AI?

Pages with answer-first structure, FAQ schema, and comparison tables get cited significantly more than narrative-style content. The data across multiple large-scale studies is consistent: structure is the strongest predictor of AI citation, stronger than domain authority or content length.

The structural elements that matter (ranked by impact)

FAQ schema: 3.2x citation lift. Pages with FAQ schema are 3.2x more likely to appear in AI Overviews than similar content without it. This is the single highest-impact structural change across the research. FAQPage has the highest AI citation rate among all schema types - beating Article, HowTo, Product, and Review.

Answer capsules: present in 72.4% of citations. Direct answers placed immediately after headings, which researchers call "answer capsules", appear in 72.4% of AI-cited content. The pattern: H2 question heading, then a 40-80 word direct answer, then supporting detail.

Comparison tables: +47% higher citation rates. When you present comparative data in tables instead of paragraphs, AI engines can extract and reuse that information directly. Passionfruit's analysis of over 1M AI summaries (2025) confirms structured formats are extracted more often because they present discrete, machine-parsable information.

Short paragraphs (1-3 sentences): +32%. Dense prose blocks are harder for AI to extract clean answers from. Short, self-contained paragraphs give AI engines clean boundaries to quote.

Question-based H2 headers: +25%. Headers phrased as questions ("What is X?", "How does Y work?") mirror the prompt-to-answer structure LLMs use, making your content more likely to match the response format they generate.


Content length barely matters

This one surprises people. Ahrefs analyzed 174,000 pages cited in AI Overviews (2025) and found the correlation between word count and being cited is 0.04 - essentially zero. 53.4% of cited pages are under 1,000 words.

The average cited page is 1,282 words. Very short pages (under 350 words) and long pages (over 2,000 words) both get cited. What matters is not how long the page is, but whether each section delivers a clean, extractable answer in 75-150 words.

Why documentation beats marketing copy

Documentation-style content gets cited far more than marketing-style blogs across every platform studied. Numbers from Passionfruit's 2025 cross-AI analysis:

| Content Type | Citation Multiplier vs Baseline |
|---|---|
| Government pages (.gov) | 11.75x |
| eCommerce product pages | 5.10x |
| Support documentation | 3.43x |
| News and media | 2.56x |
| User-generated content | 1.42x |

Why the gap? Documentation starts with answers. Marketing starts with stories.

A product doc opens with "To configure X, set parameter Y to Z." A marketing blog opens with "In today's competitive landscape, configuring X has become increasingly important for organizations seeking to..." By the time the blog reaches the answer, the AI has already found a cleaner source.

This doesn't mean you should write your blog like API docs. It means your key information should be accessible in the first 100-200 words of each section, not buried after narrative setup. AI crawlers time out after 1-5 seconds, so answer-first content gets extracted before that window closes.

How Does Each AI Platform Choose Different Sources?

ChatGPT, Perplexity, Claude, Google AI Overviews, and Gemini each pull from different source types - and the differences are bigger than most people expect. Only about 11% of domains are cited by both ChatGPT and Perplexity (2026 Averi benchmark). Treating "AI optimization" as a single strategy means missing 89% of the picture.

Platform-by-platform breakdown

ChatGPT leans heavily on product pages and documentation for B2B queries - 60.1% of citations go to product pages, feature docs, and official company pages. For general queries, Wikipedia dominates at 47.9% of top cited domains (2025 Cybernews analysis). Blog content gets only about 10.5% of citations.

ChatGPT indexes through Bing and its own GPTBot crawler, so make sure your robots.txt allows both Bingbot and GPTBot.
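One way to confirm these crawlers aren't blocked is Python's standard-library robots.txt parser, sketched below.

- Bing's crawler identifies itself as Bingbot.
- We also include OAI-SearchBot, which OpenAI's crawler documentation lists for ChatGPT search. Verify the current list there.
- `blocked_agents` is a hypothetical helper.
- `urllib.robotparser` does simple prefix matching and doesn't implement the `*` and `$` path wildcards that major crawlers support. Treat it as a first check, not a guarantee.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens to check: Bingbot feeds Bing's index; GPTBot and OAI-SearchBot are OpenAI crawlers.
AI_AGENTS = ["Bingbot", "GPTBot", "OAI-SearchBot"]

def blocked_agents(robots_txt, url, agents=AI_AGENTS):
    """Return the agents that this robots.txt would block from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]

robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_agents(robots, "https://example.com/docs/setup"))  # → ['GPTBot']
```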

Perplexity is the opposite. Reddit is the #1 source at 46.7% of top citations - and Wikipedia gets literally 0% in B2B datasets. Perplexity cites YouTube at 16.1% and includes 2.8x more citations per answer than ChatGPT. It strongly favors long Reddit answers (300+ words) over short comments.

Claude favors blog content at 43.8% of citations - nearly 4x ChatGPT's blog rate. Wikipedia is almost absent (0.1%). Claude draws from company blogs, market research firms, and vendor documentation. If you write explanatory, research-backed content, Claude is your most receptive platform.

Google AI Overviews pull 38% of citations from blog and editorial content, with Reddit at 21% and news at 23%. The strongest lever here is FAQ schema - pages with FAQ schema are 3.2x more likely to appear. AIO shares Google's main index, so organic ranking still helps with retrieval even if it's not sufficient for citation.

Gemini has YouTube as its #1 cited domain - and it cites lower-view videos (median ~4,394 views) more than ChatGPT does (median ~8,991 views). For B2B, Gemini favors official company websites, docs, and support centers.


What this means for your strategy

| Platform | Primary Source Type | Key Lever | What Wins |
|---|---|---|---|
| ChatGPT | Product docs, Wikipedia | Bing indexing + GPTBot access | Structured docs, definition pages, data hubs |
| Perplexity | Reddit, YouTube | Fresh UGC + long-form answers | Authentic community presence, 300+ word answers |
| Claude | Blogs, research | Explanatory depth | Research-backed explainer content, safety-aware framing |
| Google AIO | Blogs, Reddit, news | FAQ schema (3.2x lift) | Schema markup + top-100 organic ranking |
| Gemini | YouTube, official docs | Video content + Google ecosystem | Educational videos with timestamps, clean docs |

Your content mix predicts your AI platform fit

We noticed this while building CompetLab's Content Intelligence feature, which categorizes competitor content into types: blog posts, documentation, tools, landing pages, case studies, comparison pages, and integrations. When we run it on a typical B2B SaaS site, the content mix is almost always blog-dominant. One project we analyzed was 97% blog posts, with a single documentation page, one free tool, and one case study.

That mix is well-suited for Claude (43.8% blog preference) but leaves ChatGPT almost entirely on the table (60.1% product docs preference). Most founders don't even realize this imbalance exists because they've never mapped their content types against AI platform preferences.

If your site is 90%+ blog posts, you're probably visible on Claude and invisible on ChatGPT. If you're heavy on docs but have no community presence, Perplexity won't cite you. Content type diversity isn't just a competitive intelligence signal - it's an AI visibility strategy.
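A rough way to map your own mix is to bucket URLs by path prefix, as in the sketch below. This is not how CompetLab's Content Intelligence classifies pages. The prefixes in `TYPE_RULES` are hypothetical and will need adjusting to your site's structure; anything unmatched lands in "other".

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical path-prefix rules; adapt them to your own URL structure.
TYPE_RULES = [
    ("/blog/", "blog"),
    ("/docs/", "documentation"),
    ("/tools/", "tool"),
    ("/case-studies/", "case study"),
]

def content_type(url):
    """Label a URL by the first matching path prefix, else 'other'."""
    path = urlparse(url).path
    for prefix, label in TYPE_RULES:
        if path.startswith(prefix):
            return label
    return "other"

def content_mix(urls):
    """Percentage of URLs in each content type."""
    counts = Counter(content_type(url) for url in urls)
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.items()}

urls = [f"https://example.com/blog/post-{i}" for i in range(97)] + [
    "https://example.com/docs/setup",
    "https://example.com/tools/roi-calculator",
    "https://example.com/case-studies/acme",
]
mix = content_mix(urls)
print(mix)  # → {'blog': 97.0, 'documentation': 1.0, 'tool': 1.0, 'case study': 1.0}
print("blog-heavy" if mix.get("blog", 0) >= 90 else "mixed")  # → blog-heavy
```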

The divergence data gets more extreme within Google's own products. Google AI Overviews and Google AI Mode have only 13.7% citation overlap between them. Same company, same index, different citation patterns.

What Proof Exists That AI Content Optimization Actually Works?

Multiple documented case studies show measurable ROI from AI content optimization - from small B2B SaaS companies to enterprise manufacturers. The results are consistent: answer-first rewrites, FAQ schema, and E-E-A-T signals drive citation gains within weeks to months.

Success stories with numbers

B2B SaaS: 5% to 90% visibility in 6 months. A bootstrapped project management SaaS (12 employees) tracked AI visibility across 50 high-value queries. Baseline: mentioned in 2-3 of 50 queries (5%).

What they changed: answer-first rewrites on 32 articles, FAQ schema with 8-15 Q&As per page, named author bios with Person schema, page speed improvements (3.2s to 1.1s). Visibility hit 90% by month 6. AI-referred leads had 42% higher close rates than organic search leads. Investment: $37K over 6 months. Estimated first-year revenue from AI-sourced customers: $230K.

Industrial manufacturer: +2,300% AI referral traffic. The Search Initiative documented a B2B manufacturer that rewrote informational pages answer-first, added TL;DR blocks, tightened its heading hierarchy, and implemented Article/FAQ/HowTo schema. Result: 2,300% year-over-year growth in AI referral traffic (ChatGPT, Gemini, and AI Overviews combined), and the site went from 0 to 90 keywords cited in AI Overviews.

Kip&Co: AI citations in 4 weeks. The Australian lifestyle brand rolled out Article + FAQ schema to 120 existing content pieces. Within four weeks, pages with schema started appearing in AI platform citations, while similar pages without schema stayed invisible.

Mid-market site: +700% AI referral growth. Search Logistics documented a site that upgraded trust signals (author bios, NAP data), restructured content for AI readability (direct copy, hierarchical headings, TL;DR blocks), and added FAQ/Article/Organization schema. Result: 700%+ increase in AI referral traffic and 157 AI Overviews citations.

What failed (and what to avoid)

The LLMScout study behind the B2B SaaS case also documented failures that are just as instructive:

- Keyword stuffing for LLMs: Two articles with artificially increased brand/keyword frequency (20-30% higher density) stopped getting cited entirely. Citations returned only after removing the extra repetitions.

- Thin "AI bait" content: Five 500-700 word articles on basic questions, targeted as easy wins, earned zero AI citations across months of testing. Meanwhile, 1,500-2,500 word structured content on the same topics got cited consistently.

- SPA migration: An edtech site rebuilt as a React single-page application (SPA) became "visually stunning, performance-grade fast - and utterly invisible in search." It had no unique URLs, no sitemaps, and no structured data. AI crawlers and LLMs could not access the content at all.

The takeaway: LLMs appear to use quality filters as strict as Google's. If you wouldn't try it on Google in 2026, don't try it on AI platforms either.

The honest picture on AI traffic ROI

AI traffic converts differently depending on your vertical:

| Metric | B2B (Seer Interactive) | eCommerce (973-site study) |
|---|---|---|
| AI traffic share | 0.07% of organic | 0.2% of sessions |
| ChatGPT conversion rate | 15.9% | Below organic average |
| Google organic conversion rate | 1.76% | Higher than ChatGPT |
| Session duration vs organic | 68% longer (SE Ranking) | Not reported |

Source: Seer Interactive, Search Engine Land, SE Ranking

B2B brands see 5-9x higher conversions from AI traffic. E-commerce does not - at least not yet. AI traffic overall grew 7x year-over-year through 2025 and visitors spend 68% more time on site than organic search visitors.
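The upper end of that range follows directly from the B2B column of the table above:

```latex
\frac{15.9\%}{1.76\%} \approx 9.0
```

In other words, ChatGPT visitors in the Seer Interactive B2B data converted at roughly nine times the Google organic rate.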

The volume is small today. The trajectory isn't. I'd rather figure out this channel now while the competition is thin than scramble later when everyone's optimizing for it.

What Should You Do First?

Start with the five changes that have the strongest evidence behind them. These are ordered by impact-to-effort ratio based on the case studies above.

Step 1: Rewrite your top pages answer-first

Pick your 10 highest-traffic pages. For each H2 section, put the direct answer in the first 1-2 sentences (40-80 words). Follow with supporting evidence. Keep each section self-contained at 75-150 words so AI engines can extract it cleanly.

Before: "Remote work has transformed how teams collaborate. With the rise of distributed teams across the globe, finding the right communication tools has become more critical than ever. In this comprehensive guide, we'll explore..."

After: "The best communication tools for remote teams in 2025 are Slack (best for instant messaging), Zoom (best for video), and Loom (best for async video). Here's a detailed comparison based on team size, budget, and use case..."
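To audit a draft against these targets, a small script can split a page at its H2 headings and count words, as sketched below. It assumes:

- Markdown source with `## ` headings;
- the first paragraph as a proxy for "the first 1-2 sentences";
- words counted by whitespace.

`audit_sections` is a hypothetical name. The thresholds simply restate the 40-80 and 75-150 word targets above.

```python
import re

def audit_sections(markdown, answer_range=(40, 80), section_range=(75, 150)):
    """Flag H2 sections whose opening answer or total length falls outside the target ranges."""
    # With one capture group, re.split yields [preamble, heading1, body1, heading2, body2, ...]
    parts = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    report = []
    for heading, body in zip(parts[1::2], parts[2::2]):
        paragraphs = [p for p in re.split(r"\n\s*\n", body.strip()) if p.strip()]
        opening = len(paragraphs[0].split()) if paragraphs else 0  # proxy for the direct answer
        total = len(body.split())
        report.append({
            "heading": heading.strip(),
            "opening_words": opening,
            "section_words": total,
            "answer_first": answer_range[0] <= opening <= answer_range[1],
            "section_ok": section_range[0] <= total <= section_range[1],
        })
    return report

md = (
    "Intro\n\n## What is X?\n\n" + " ".join(["word"] * 50)
    + "\n\n" + " ".join(["more"] * 50)
    + "\n\n## Why?\n\n" + " ".join(["short"] * 10) + "\n"
)
for section in audit_sections(md):
    print(section["heading"], section["answer_first"], section["section_ok"])
```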


The LLMScout case study showed this single change - applied across 32 articles - was the primary driver of going from 5% to 18% AI visibility in just two months.

Step 2: Add FAQ schema to high-intent pages

Add 5-10 real Q&As per page, pulled from customer support tickets, sales calls, and Reddit threads. Wrap them in validated FAQPage JSON-LD. The questions must match visible content on the page - schema that doesn't match what users see gets ignored or penalized.

Use a single, consolidated FAQPage block per URL. Multiple FAQ blocks from plugins cause validation errors. Test with Google's Rich Results Test before publishing.
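Here is a minimal sketch of generating that single, consolidated FAQPage block in Python. The `FAQPage`/`Question`/`Answer` structure follows schema.org. The `faq_jsonld` helper and its exact-substring visibility check are our own assumptions: the check is a crude guard against schema that doesn't match visible content. The output still needs a pass through the Rich Results Test.

```python
import json

def faq_jsonld(qas, visible_text):
    """Build one FAQPage JSON-LD script tag from (question, answer) pairs.

    Raises ValueError if any question or answer is not found verbatim in the page's visible text.
    """
    missing = [q for q, a in qas if q not in visible_text or a not in visible_text]
    if missing:
        raise ValueError(f"Q&As not visible on the page: {missing}")
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qas
        ],
    }
    # Escape "</" so an answer containing "</script>" can't close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'

page_text = (
    "Does traditional SEO still matter for AI visibility? "
    "Yes. Organic ranking gets you into the candidate pool."
)
qas = [(
    "Does traditional SEO still matter for AI visibility?",
    "Yes. Organic ranking gets you into the candidate pool.",
)]
print(faq_jsonld(qas, page_text))
```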

Step 3: Strengthen E-E-A-T signals

Replace "Team" or "Admin" bylines with named authors. Add 150-200 word bios with credentials, job title, and LinkedIn link. Implement Person schema. Link to external talks, publications, or interviews.

This is not vanity. The B2B SaaS case study showed E-E-A-T signals were one of three changes (alongside answer-first rewrites and schema) that drove the 5% to 90% visibility shift.
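A minimal sketch of the Person markup for a named byline follows. `name`, `jobTitle`, `description` and `sameAs` are standard schema.org properties. The helper name, the example author, and the LinkedIn URL are hypothetical, and the 150-200 word check just restates the bio target above.

```python
import json

def author_jsonld(name, job_title, bio, linkedin_url, other_profiles=()):
    """Person JSON-LD for a named author; checks the bio against the 150-200 word target."""
    words = len(bio.split())
    if not 150 <= words <= 200:
        raise ValueError(f"Bio is {words} words; aim for 150-200")
    data = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "description": bio,
        # sameAs ties the byline to external profiles, talks, or publications
        "sameAs": [linkedin_url, *other_profiles],
    }
    return json.dumps(data, indent=2)

bio = " ".join(["Placeholder"] * 160)  # stand-in for a real 160-word bio
print(author_jsonld("Jane Doe", "Head of Content", bio, "https://www.linkedin.com/in/jane-doe-example"))
```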

Step 4: Increase content freshness cadence

76.4% of ChatGPT's most-cited pages were updated in the last 30 days (as of 2025 Ahrefs data). Content older than 12 months is roughly 50% less likely to be cited. But changing just the date doesn't work - AI engines track semantic change (new sections, updated numbers, revised examples), not timestamps.
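How AI engines detect "semantic change" isn't documented, so the sketch below is only an editorial self-check. It runs a word-level diff with Python's `difflib` that ignores date lines, so a date-only refresh scores zero. It measures lexical change, not meaning. The `DATE_LINE` pattern and the `changed_share` name are our assumptions.

```python
import difflib
import re

# Lines like "Updated: 2025-06-01" or "Last updated June 2025" are ignored.
DATE_LINE = re.compile(r"^(last updated|updated|published)\b.*$", re.IGNORECASE | re.MULTILINE)

def changed_share(old, new):
    """Fraction of the word sequence that differs between two versions, date lines excluded."""
    old_words = DATE_LINE.sub("", old).split()
    new_words = DATE_LINE.sub("", new).split()
    return 1 - difflib.SequenceMatcher(None, old_words, new_words).ratio()

old = "Updated: 2024-01-10\nHeat pumps work in winter."
date_only = "Updated: 2025-06-01\nHeat pumps work in winter."
real_edit = "Updated: 2025-06-01\nHeat pumps work in winter.\nA new section covers cold-climate installs."
print(changed_share(old, date_only))  # → 0.0
print(round(changed_share(old, real_edit), 2))
```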

For high-value pages: update quarterly with real editorial passes. For data-heavy content: update when the underlying numbers change. For evergreen guides: annual review with fresh examples.
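These cadence rules translate into a simple due-date check. A few assumptions apply:

- The page categories and the 91-day stand-in for "quarterly" are our own.
- `last_edit` should record the last substantive editorial pass, not the visible timestamp.
- Data-heavy pages get no fixed interval, because they refresh when their numbers change.

```python
from datetime import date, timedelta

# Assumed intervals: ~quarterly for high-value pages, annual for evergreen guides.
# Data-heavy pages refresh when their numbers change, so they get no fixed interval.
CADENCE_DAYS = {"high_value": 91, "evergreen": 365}

def due_for_refresh(pages, today):
    """Return URLs whose last substantive edit is older than their cadence allows."""
    due = []
    for url, kind, last_edit in pages:
        interval = CADENCE_DAYS.get(kind)
        if interval is not None and today - last_edit > timedelta(days=interval):
            due.append(url)
    return due

pages = [
    ("/pricing-guide", "high_value", date(2025, 1, 15)),
    ("/glossary", "evergreen", date(2025, 1, 15)),
    ("/benchmarks", "data_heavy", date(2023, 1, 1)),
]
print(due_for_refresh(pages, today=date(2025, 7, 1)))  # → ['/pricing-guide']
```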

Step 5: Test across platforms separately

Manual testing protocol: pick 20-50 queries relevant to your business. Test each in ChatGPT, Perplexity, Claude, and Google (for AI Overviews). Record which queries return your content and on which platform. Repeat weekly or biweekly.

This is how the B2B SaaS tracked their 5% to 90% progress. It's also how they discovered that Claude preferred their long explanatory content, ChatGPT preferred their stats and comparison pages, and Perplexity rewarded their freshest updates.
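If you log manual results by hand, the weekly per-platform rollup takes a few lines of Python. The tuple layout and the sample queries below are hypothetical. The point is to compute visibility separately per platform rather than as one blended "AI" number.

```python
from collections import defaultdict

def visibility_by_platform(results):
    """Percent of tested queries that cited you, per week and platform.

    results: iterable of (week, platform, query, cited) tuples recorded by hand.
    """
    tested = defaultdict(lambda: defaultdict(set))
    cited = defaultdict(lambda: defaultdict(set))
    for week, platform, query, was_cited in results:
        tested[week][platform].add(query)
        if was_cited:
            cited[week][platform].add(query)
    return {
        week: {
            platform: round(100 * len(cited[week][platform]) / len(queries), 1)
            for platform, queries in platforms.items()
        }
        for week, platforms in tested.items()
    }

log = [
    ("2025-W01", "ChatGPT", "project management tool for small teams", True),
    ("2025-W01", "ChatGPT", "project management pricing comparison", False),
    ("2025-W01", "Perplexity", "project management tool for small teams", False),
    ("2025-W01", "Perplexity", "project management pricing comparison", False),
    ("2025-W02", "ChatGPT", "project management tool for small teams", True),
    ("2025-W02", "ChatGPT", "project management pricing comparison", True),
]
print(visibility_by_platform(log))
# → {'2025-W01': {'ChatGPT': 50.0, 'Perplexity': 0.0}, '2025-W02': {'ChatGPT': 100.0}}
```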

I'll be honest - manual testing is how we started at CompetLab too. It works until you hit 30+ queries across 3+ platforms and need to track week-over-week trends for yourself and competitors. That's when we automated it. CompetLab's AI visibility tracking runs this per-platform breakdown automatically so you can see which content types get cited where - and whether your answer-first rewrites are actually moving the needle.

What NOT to do

- Don't keyword-stuff for LLMs. It kills citations, just like it kills Google rankings.

- Don't create thin "AI bait" content. 500-word articles targeting easy questions earned zero citations in controlled testing.

- Don't treat AI as one platform. ChatGPT and Perplexity share only 11% of cited domains. Optimizing for "AI" generically wastes effort.

- Don't forget technical access. If your content requires JavaScript rendering, most AI crawlers can't see it. Server-side rendering is a prerequisite, not an optimization.
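A quick way to test that last point is to inspect the HTML your server sends before any JavaScript runs and check whether a key sentence is in it. You could fetch that HTML with `curl` or `urllib.request`. The sketch below handles the checking half with Python's standard `html.parser`. `visible_without_js` is a hypothetical helper. Passing the check doesn't prove a given AI crawler will index the page, only that it doesn't need JavaScript to see the text.

```python
from html.parser import HTMLParser

class _TextExtractor(HTMLParser):
    """Collects text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)

def visible_without_js(raw_html, key_phrase):
    """True if key_phrase appears in the server-sent HTML text, i.e. without running JavaScript."""
    parser = _TextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split())  # normalize whitespace
    return key_phrase.lower() in text.lower()

ssr = "<html><body><h2>How do heat pumps work?</h2><p>Heat pumps move heat.</p></body></html>"
spa = '<html><body><div id="root"></div><script>render("Heat pumps move heat.")</script></body></html>'
print(visible_without_js(ssr, "Heat pumps move heat."))  # → True
print(visible_without_js(spa, "Heat pumps move heat."))  # → False
```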

Read next: How ChatGPT Searches Differently Than Google - the retrieval mechanics behind the divergence data in this article.

Related: Link Building for AI Visibility | Lazy Loading and AI Visibility

Frequently Asked Questions

Does traditional SEO still matter for AI visibility?

Yes - more than most "AI SEO" vendors want to admit. Estimates vary by study and dataset, but one analysis found about 70% of pages cited in AI Overviews also rank in the top 10 organically. Organic ranking gets you into the candidate pool. AI optimization wins the re-ranking stage. You need both.

How long before AI content optimization shows results?

FAQ schema: 2-4 weeks (Kip&Co saw citations within a month of adding schema to 120 pages). Answer-first rewrites: 4-8 weeks for early gains. Full programs compound over 3-6 months - the B2B SaaS case hit 18% at month 2, 52% at month 4, 90% at month 6.

Is it worth optimizing for AI when it drives less than 1% of traffic?

For B2B, yes. AI referrals convert 5-9x better than organic, and AI traffic grew 7x year-over-year through 2025. Early movers build compounding advantages: the B2B SaaS that reached 90% visibility now gets cited in 45 of its 50 tracked queries, making it harder for competitors to displace it.

For e-commerce, the case is weaker. ChatGPT referrals currently convert below organic rates. I'd still track it, but I wouldn't restructure my content strategy around it yet.

Should I optimize for all AI platforms or focus on one?

Start where your audience is. B2B: ChatGPT (60% product docs) and Claude (44% blogs). Consumer: Perplexity (Reddit-heavy) and Google AI Overviews (FAQ schema is the strongest lever). Expand from there.

Can AI optimization hurt my Google rankings?

Every documented case study - LLMScout, Search Initiative, Search Logistics - reported improved organic rankings alongside AI visibility gains. The Search Initiative client went from 808 to 1,295 keywords in Google's top 10 while gaining 2,300% AI referral growth. Google's own guidance: "AI features use the same core signals as search." The optimizations help both channels.
